STACKx Cybersecurity 2026

Programme Outline

*Please note that the programme may be subject to change without prior notice.

The full speech is available at https://www.mddi.gov.sg/newsroom/opening-address-by-sms-tan-kiat-how-at-stack-x-cybersecurity-conference-2026/.

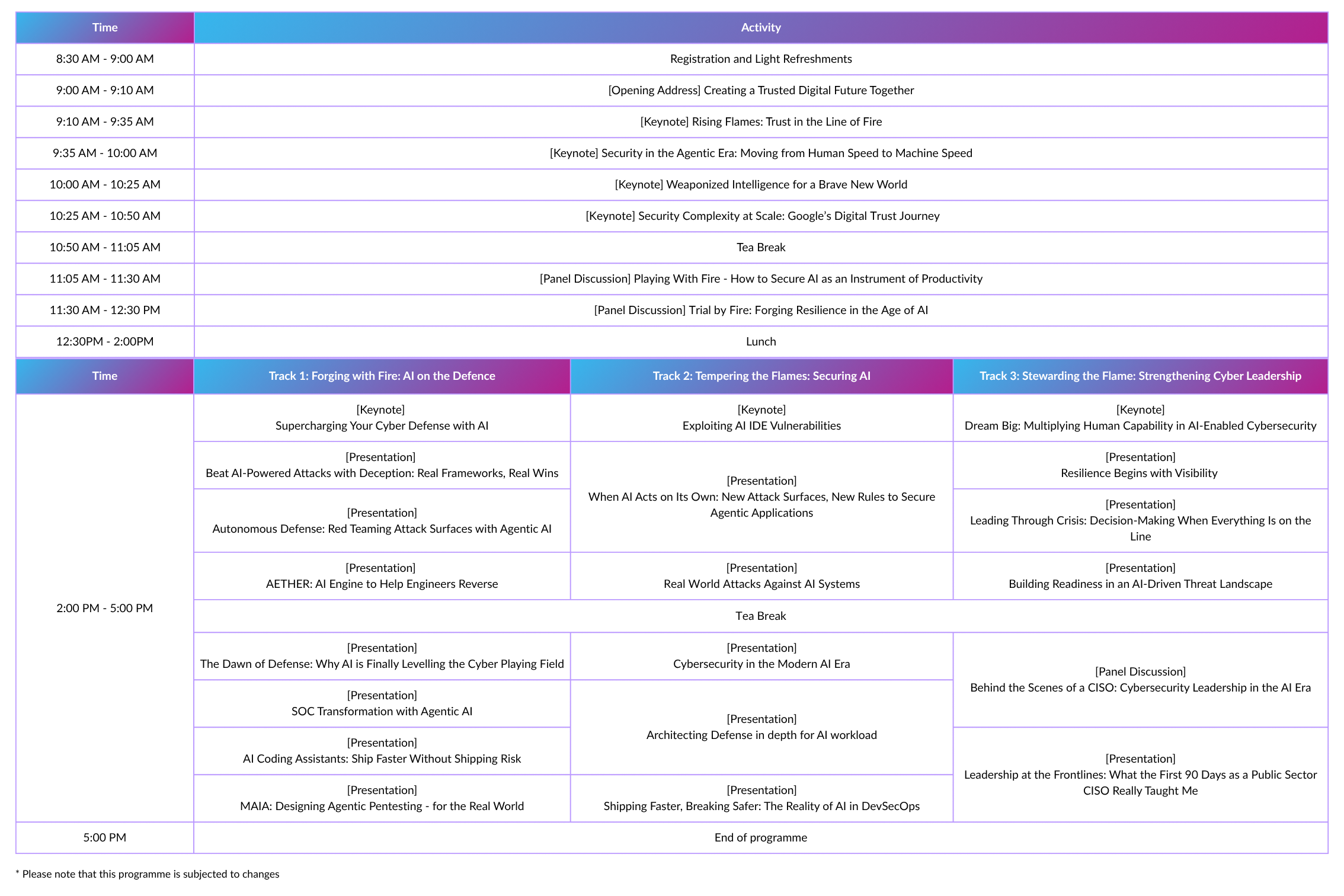

Mr TAN Kiat How

In the past decade, the Singapore government has transformed its service delivery model, moving from paper forms and manual processes to seamless digital services. But rapid digitalisation has come at a cost. The government’s digital attack surface has exploded, expanding from a few centralised websites and gateways to a sprawling, inter-dependent ecosystem of apps, APIs and SaaS platforms. Now, AI is making cybersecurity even harder, as it brings new risks and challenges that we’re still learning to navigate. In this keynote, we will explore concrete examples of how GovTech has strengthened the government’s cybersecurity posture in the past decade. We will also examine some of the salient risks of AI and share what GovTech has been doing to mitigate them. Ultimately, no single organisation has all the answers. The only way forward is to work together, embrace uncertainty, and invest in our people. Only then can we continue to uphold digital trust and harness the flames of AI for good.

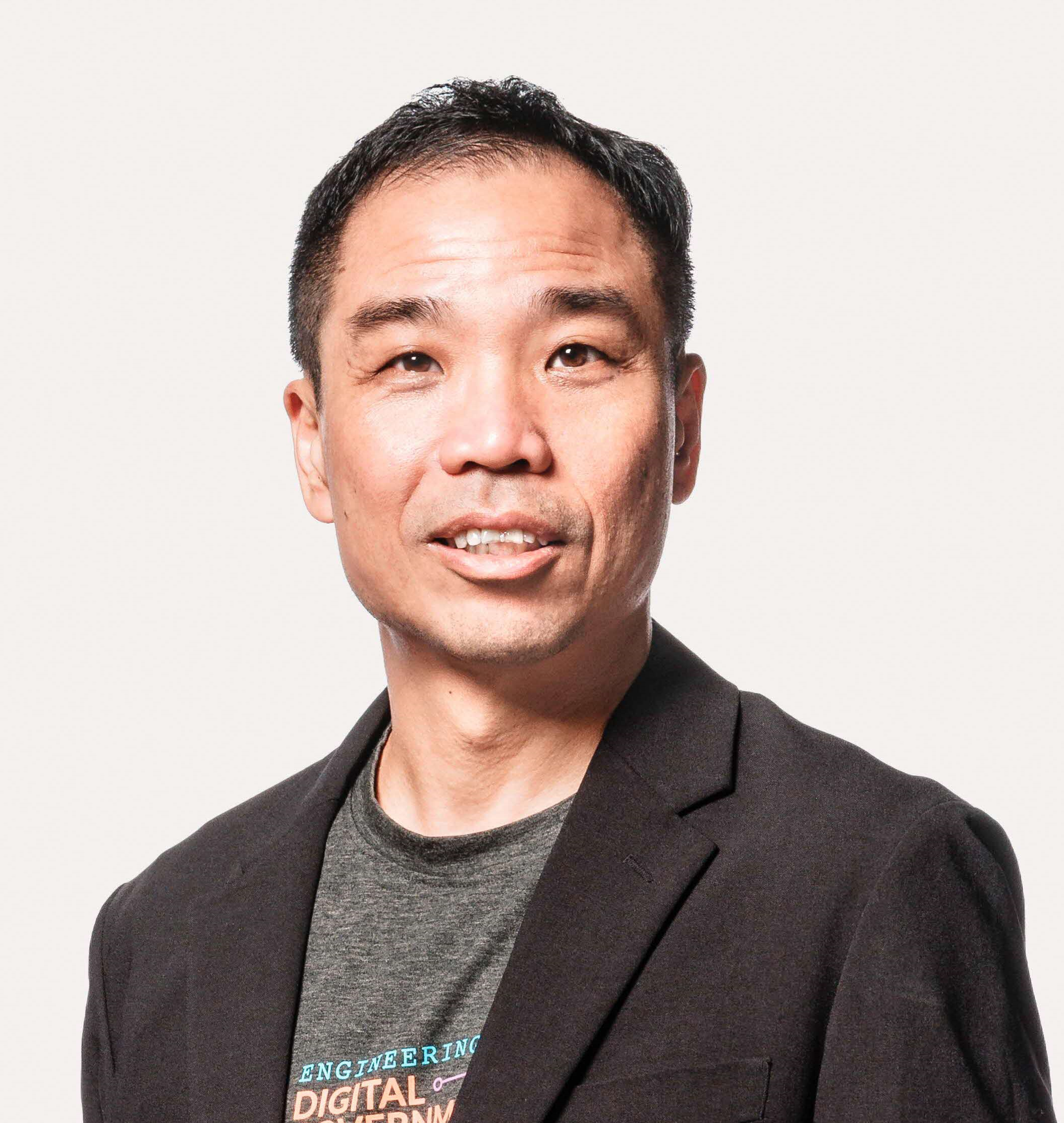

Mr GOH Wei Boon

Government Chief Digital Technology Officer, Ministry of Digital Development and Information
Chief Executive, GovTech Singapore

CrowdStrike CISO Adam Zoller will provide insights into how the threat landscape has evolved over the past year. He will cover how adversaries are growing in complexity and scale, shifting their tradecraft to exploit trusted systems such as legitimate software, moving away from malware, and increasingly leveraging human-driven attacks. He’ll share CrowdStrike data on rapidly narrowing breakout times, the most common ways adversaries are using AI to accelerate their operations, and why identity is becoming the new perimeter, along with regional and strategic shifts in adversary activity. Finally, he’ll offer actionable recommendations for how organizations can shift from defending at human speed to defending at machine speed.

Mr Adam ZOLLER

Chief Information Security Officer, CrowdStrike

Generative AI did not just speed up low-skill tradecraft. It changed the offensive operating model. The emerging threat is not an LLM that can generate questionable PowerShell; it is agentic autonomy: tool-using systems that can plan, execute, validate results, recover from failure, and pivot through an environment with minimal human input. We are moving from prompt engineering to agent engineering, and adversaries are already assembling workflows that look less like one-off intrusions and more like continuously running operators with higher throughput, lower cost, and fewer fatigue-driven mistakes.

This talk breaks down how attackers operationalize agent-assisted tradecraft across the kill chain, from reconnaissance and environment mapping to privilege discovery, credential access, lateral movement decision-making, and objective pursuit. The focus is not on “AI magic,” but on what makes these systems dangerous in practice: reliability under friction. Real environments are messy. Tools fail, permissions are partial, telemetry is noisy, and assumptions break constantly. The most operationally relevant agents are not the ones that sound clever; they are the ones that can iterate, debug, and adapt without burning the operation or announcing themselves in logs.

For red teams, the question is no longer “Can an LLM write an exploit?” It is “Can an agent repeatedly achieve objectives, recover safely from failure, and stay inside scope without creative overreach?” The session presents a practical approach to agent evaluation and governance, including an assessment harness to measure task success rates, drift, error modes, and regressions over time. Attendees will learn how to design bounded exercises with defensible auditability and how to avoid the common failure modes that turn agent experiments into unreliable theater.

We then pivot to detection and response. Instead of brittle text-based “AI detectors,” we will identify telemetry-native opportunities to spot agent-driven operations: tool invocation patterns, endpoint process-tree semantics, network sequencing, and timing characteristics that differ from human-led workflows. The goal is to give practitioners a concrete framework to safely red team agentic workflows and to illuminate measurable detection surfaces before autonomous tradecraft becomes routine.

Key Takeaways
1. Understand how attacker agent workflows are structured for throughput and adaptation, and translate them into safe, scoped red team exercises with auditability.
2. Build an evaluation harness to quantify reliability and regression risk using measurable outcomes: success rates, drift, and error modes.
3. Identify detection opportunities for agent-driven activity using endpoint and network telemetry patterns, not content classifiers.

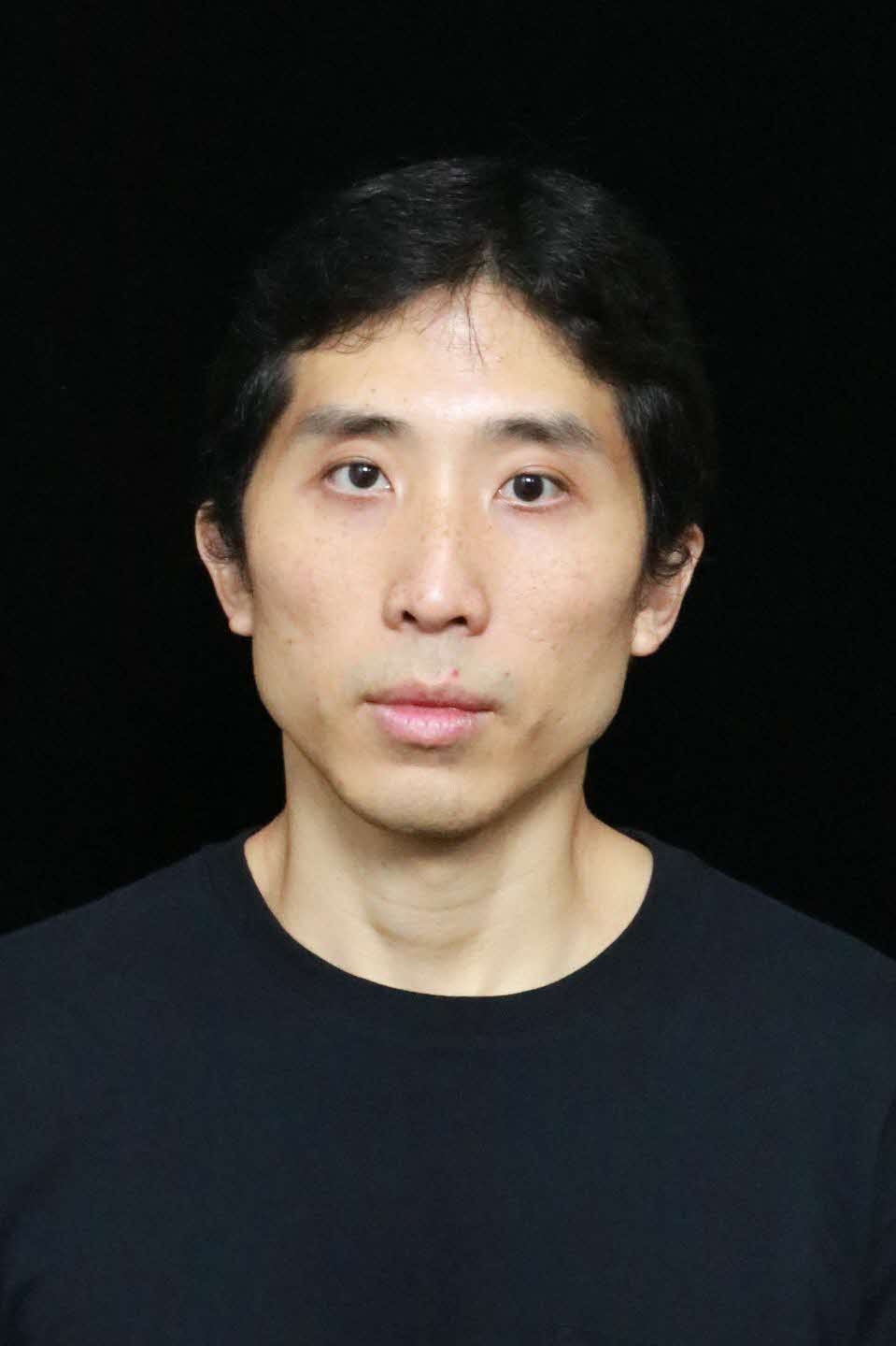

Mr Aamir LAKHANI

Global Director of Threat Intelligence, Fortinet

Defending hyperscale infrastructure requires more than reactive measures; it demands AI-driven precision. This keynote for the 2026 STACKx Cybersecurity Conference explores Google’s mission to protect billions of users by analyzing trillions of daily signals across the world's largest private network. Moving beyond traditional defense, Google uses proactive threat disruption tools such as Project Big Sleep and CodeMender to find and fix vulnerabilities before they can be exploited. As adversaries evolve in speed and complexity, Google leverages advanced AI and automation to redefine security at scale.

Mr Steve HAGER

Director (Office of the CISO), Google Cloud

In the pursuit of hyper-efficiency, AI is becoming the core engine of industrial-scale productivity. However, as AI agents evolve from simple tools into autonomous identities, the traditional security perimeter has started to erode. This candid conversation between Steve Hager, Director in Google Cloud’s Office of the CISO, and Chang Sau Sheong, Deputy Chief Executive (Products) of GovTech, tackles the challenge of securing a world where nearly 25% of code is machine-generated. We explore the transition from prevention to survival, investigating how to implement chaos engineering for AI and establish kill switches for autonomous systems. If prevention eventually fails at machine speed, what does it take to be truly fireproof? Discover the technical anchors required to move past paralysis and build a posture of practical resilience that can withstand security incidents at different scales.

Mr CHANG Sau Sheong

Chief Technology Officer and Deputy Chief Executive (Products), GovTech Singapore

Mr Steve HAGER

Director (Office of the CISO), Google Cloud

In an era where digital threats move at the speed of thought, traditional defences are no longer enough to hold the line. This panel brings together elite security leaders from national infrastructure, finance, and security providers to dissect the ground truth of modern cyber offence and defence. We move beyond the buzzwords to explore the bottlenecks that leave sectors vulnerable and to identify where AI provides a genuine defensive edge and where it is mere smoke and mirrors. From the friction of legacy constraints to the necessity of public-private synergy, join us for a deep dive into how the world’s most critical sectors are re-engineering their foundations to survive an increasingly volatile landscape, and how these leaders are prioritizing objectives and investing resources to adapt to these changes.

Dr Alissa ABDULLAH

Deputy Chief Security Officer, Mastercard

Mr Ian LIM

Head Regional CXO Advisor, Cisco

Mr ONG Kok Wee

Assistant Chief Executive, Cybersecurity Agency of Singapore

Mr WONG Pei Yuen

Group Chief Information Security Officer, Singtel Group

Mr Volker RATH

Field Chief Information Security Officer, Cloudflare

Dr Adrian TANG

We are entering an era where AI agents are not just making existing work faster; they are a new workforce of co-workers that dramatically expands what organisations can accomplish in their business. The sky is the limit, and security teams now hold the key to enabling organisations to unlock the full potential of the agentic workforce with security and trust. To unleash the vast potential of agentic AI, organisations need to harness AI to secure the agentic workforce by (1) establishing trust before AI agents get to work, (2) applying AI defense to safeguard the agentic workforce, and (3) empowering the SOC to detect and respond to AI incidents at machine speed and scale.

Mr Dave WEST

Senior Vice President (Global Specialists), Cisco

In this session, Gal will walk through recent flaws in AI-powered IDEs from several vendors and show end-to-end exploitation chains that combine AI-driven behavior with classic flaws to achieve remote code execution (RCE). He will also call out the most relevant control gaps and give developers and defenders concrete steps they can apply.

Mr Gal ZROR

Research Director, CyberArk, A Palo Alto Networks Company

As AI transforms from experimental pilots to core business operations, cybersecurity faces a fundamental shift. Threats now move at machine speed, yet many organisations still operate with governance processes designed for a slower era. This keynote explores how security leaders must redesign their approach: not by slowing AI down, but by building governance that matches the new pace. Government Chief Information Security Officer Justiin Ang examines the evolution from human-only operations to human-machine teaming, addressing critical questions about workforce development, institutional knowledge preservation, and decision-making at scale. Rather than viewing AI as a replacement threat, he presents a vision where technology multiplies human capability, enabling teams to achieve exponentially more while maintaining accountability and judgment. Drawing from Singapore's experience developing AI-assisted security tools, this session offers practical insights on building trustworthy automation, preserving expertise, and preparing cyber professionals for an AI-enabled future where speed becomes the battlefield.

Mr Justiin ANG

Government Chief Information Security Officer & Assistant Chief Executive (Cybersecurity), GovTech Singapore
Assistant Chief Executive (Development), Cybersecurity Agency of Singapore

Attackers aren’t just getting better; they’re getting faster, using AI and automation to scout, adapt, and strike at scale. Meanwhile, defenders are expected to protect the business without slowing it down, and to prove that security investments actually reduce risk. In this session, we’ll cut through the noise and focus on genuine changes in the threat landscape. We'll show how deception, done thoughtfully, can give defenders earlier, higher-confidence detection of advanced threats. We’ll tie deception to familiar models like MITRE ATT&CK and the Kill Chain, walk through practical scenarios and case studies, and share the lessons learned: start with the threats you actually see, build believable environments, and use GenAI lures to create tripwires attackers can’t resist.

Mr Lee DOLSEN

Vice President (Product Management), Zscaler

This presentation explores how the cyber risk landscape is becoming more complex as AI, expanding attack surfaces, and growing third-party dependencies increase organisational exposure. Using Singapore-specific analysis, the presentation highlights three core themes: concentration risk among a small number of key providers, gaps in vendor monitoring, and the outsized threat posed by unmonitored critical suppliers. It argues that traditional breach prevention is no longer enough. Instead, organisations need resilience: the ability to anticipate, withstand, and recover from cyber disruption. The session will equip leaders with a practical, intelligence-led framework for managing cyber risk, moving beyond prevention toward continuous visibility, better prioritisation, and stronger enterprise resilience.

Mr Stephen BOYER

Co-Founder and Chief Innovation Officer, Bitsight

As public agencies scale up their use of AI beyond traditional software solutions, the security challenge is keeping pace with the adoption of AI and agent-powered applications. We’re now dealing with autonomous AI agents that gather information, interpret context, and execute decisions on their own. In this new paradigm, external data effectively becomes “live input” that can influence an agent’s behavior. This exposes the organisation to entirely new attack vectors and risks that current security strategies are not designed to handle.

Mr Rob PARRISH

Head of Product (AI Agent Security), Check Point Software

Modern cloud environments present increasingly complex attack surfaces that traditional security approaches struggle to defend. This session explores how agentic AI transforms cloud security through autonomous red, blue, and green team operations working in parallel. We will discuss how AI-powered red team agents conduct comprehensive risk assessments and attack path analysis to proactively test defenses before adversaries exploit them. Blue team agents enrich security events with contextual intelligence for high-fidelity threat detection and automated investigation workflows. Green team agents drive remediation at scale by continuously improving security at the source. Drawing from Wiz's cloud security expertise, we will examine how External Attack Surface Management (EASM) integrates with agentic AI to secure today's cloud assets while safeguarding the AI workloads and pipelines of tomorrow. Attendees will gain insights into autonomous security operations that break engineering-security silos, enabling defense-in-depth for cloud-native environments.

Mr Matthew ZWOLENSKI

Senior Director (Asia-Pacific Japan), Wiz

When a cyber crisis unfolds, leaders must act quickly in an environment where information is incomplete, time is limited, and the consequences are significant. High-stakes decisions must be made as situations evolve rapidly, requiring leaders to balance speed with accuracy while determining the right course of action without having the full picture. Effective crisis leadership extends beyond technical response. Leaders must coordinate people and processes by aligning technical teams, engaging senior stakeholders, managing communications, and maintaining organisational trust while response efforts are underway. At the same time, they must sustain control and credibility by projecting calm, setting clear priorities, and ensuring critical services continue to operate despite disruption. As organisations increasingly rely on AI to support detection and response, leaders must also understand how it shapes crisis management. While AI can enhance speed and visibility during incidents, it can also introduce new risks if relied upon without sufficient governance and oversight.

Mr Bryce BOLAND

Head of Security SA (Asia-Pacific Japan), Amazon Web Services

As the volume and sophistication of malware continue to rise, traditional reverse engineering methods struggle to keep pace. With recent advances in LLM capabilities, we began exploring how LLMs can be applied to reverse engineering. This talk shares our journey developing AETHER, an in-house AI-assisted reverse engineering tool designed to automate routine analysis tasks.

Mr ANG Guang Yao

Cybersecurity Researcher (Malware), Centre for Strategic Infocomm Technologies

Mr KAN Onn Kit

Cyber Specialist (Defence Cyber Command), Digital and Intelligence Service

In this talk, we'll explore the most prevalent attack vectors and real-world threats targeting AI-enabled systems. Drawing from our hands-on experience in both attacking and defending enterprise AI applications, we'll give you visibility into common misconfigurations and vulnerabilities. This will help you evaluate your own attack surface and build more secure AI systems.

Mr Brian CHAMBERLAIN

Manager, CrowdStrike

Cyber readiness is no longer defined solely by how well organisations secure infrastructure and applications. As AI becomes embedded across digital environments, it introduces a new attack surface spanning models, data pipelines, agent frameworks, and integrated systems. Adversaries can exploit weaknesses such as prompt injection, data poisoning, model manipulation, and agent misuse. Securing AI therefore requires continuous testing, strong governance over data and models, and safeguards to monitor how AI systems behave in production.

At the same time, AI is transforming both offensive and defensive cyber operations. Attackers can accelerate reconnaissance, automate social engineering, and scale vulnerability discovery, while defenders can use AI to triage alerts, analyse telemetry, simulate adversarial behaviour, and support investigations. Organisations that operationalise AI effectively while maintaining strong human oversight will hold a significant advantage.

This shift also demands a rethink of cyber capability development. Security teams require continuous, hands-on training that reflects AI-assisted workflows and modern attack techniques. Organisations must also benchmark how AI performs in operational tasks and how effectively it augments human analysts to build resilient cyber teams.

Mr Gerasimos MARKETOS

Chief Product Officer, Hack The Box

For years, the narrative has been dominated by the "AI-powered attacker". From crafting perfect phishing lures to generating endless malware variants, adversaries are more efficient than ever. But for the defender, already drowning in alerts, unpatched systems, and a chronic talent shortage, is this the end? Not quite. This keynote argues that we are witnessing the true dawn of AI for cyber defense. Sharing raw, "in-the-trenches" insights from GovTech’s internal trials, we move past vendor hype to explore the reality of using AI for both Red and Blue operations. We’ll discuss the friction of early adoption, the challenge of hallucinations, and our recent experiences in using AI for vulnerability discovery and incident triage. While the journey hasn’t been easy, the technology is finally maturing into a reliable force multiplier that allows defenders to scale at the speed of the threat.

Mr ONG Hong Joo

Senior Director (Cyber Security Group, Cyber Defence Ops & Intel Division), GovTech Singapore

The modern AI era is rapidly disrupting cybersecurity, both in how adversaries operate and in how defenders operate. Adversaries are adopting AI to perform many of the functions humans performed in the past, and agentic AI will transform many aspects of an attack. The cybersecurity industry is undergoing a sea change in which the tools and practices previously used to defend networks are being transformed to improve protection against these new adversary tactics, techniques, and procedures (TTPs). In this session, we'll cover how adversaries are using AI and how defense is changing to lower your risk of compromise.

Mr Jon CLAY

Vice President (Threat Intelligence, TrendAI), Trend Micro

Discover how agentic AI is transforming Security Operations Centers with AI-driven, autonomous experiences, dramatically improving threat detection and response efficiency while uncovering novel threats faster.

Mr Denver SPITZ

Regional Security Architect, Google Cloud

Cybersecurity leaders are increasingly operating in environments where AI is embedded across security tools, workflows, and decision processes. By analysing vast volumes of signals and surfacing risks more quickly, AI is helping security teams strengthen threat detection and gain greater confidence in their decisions. But beyond the potential, what's working in practice? This fireside chat offers a behind-the-scenes perspective on how CISOs are using these capabilities in real-world environments. Speakers will share examples of where AI has improved security outcomes. They will also discuss the emerging challenges of securing AI systems themselves, from adversarial attacks to model vulnerabilities that traditional security controls struggle to address. The conversation will also examine the parallel responsibility of managing growing dependence on AI in security operations. As AI becomes integral to threat response and decision-making, leaders must balance its benefits against the risks of over-reliance, ensuring that human judgement, accountability, and strategic control remain firmly in place.

Mr Daryl PEREIRA

Director & APAC Head of Office of the CISO, Google Cloud

Mr Tobias GONDROM

Group Chief Information Security Officer, United Overseas Bank

Mr Bernard TAN

This session dives deep into advanced security architectures for AI workloads on AWS, exploring defense strategies against sophisticated attack vectors. Through technical examples, attendees will implement secure architectures covering identity, fine-grained access policies, and foundation model deployment patterns. Learn how to harden generative and agentic AI applications using AWS security capabilities, apply least-privilege controls across every layer of your AI pipeline, and build scalable secure architectures.

Mr KIM Kuok Chiang

Principal Security Advisor, Amazon Web Services

AI coding assistants are transforming software development by dramatically increasing delivery speed. But, as we have all seen in the news, they can also amplify security risks such as insecure patterns, dependency vulnerabilities, and review blind spots. In this session, we review how companies can adopt AI coding assistants safely, without trading speed for risk. We will cover practical examples of prompting AI for secure-by-default code, adapting code review practices for AI-generated changes, and integrating AI-assisted security testing, such as SAST and secret scanning, into CI/CD pipelines. We will also discuss how threat modeling must evolve for AI-enabled and agentic applications, where tools, data access, and autonomy introduce new attack surfaces. Finally, we will review which policies, repository controls, dependency governance, and auditability settings can be deployed.

Mr Arnaud LHEUREUX

Chief Developer Advisor Asia, Microsoft

Good pentesters don’t just run tools; they think. They map their terrain before striking, understand fundamentally how their targets respond, and carry what they learn from one finding into the next. Today’s AI-powered security tools promise to do all of this. They work on Capture-the-Flag (CTF) boxes. Real websites, though? Not so much. We built Multi Agent Infosecurity Assistant (MAIA) to change that: a multi-agent system that mirrors how experienced pentesters work, with the engineering to back it up. In this talk, we pull back the curtain on what it takes to move AI pentesting from demos to sustainable engagements on real websites, and on the hard trade-offs between autonomy and safety. A conversation you’ll definitely want to be part of.

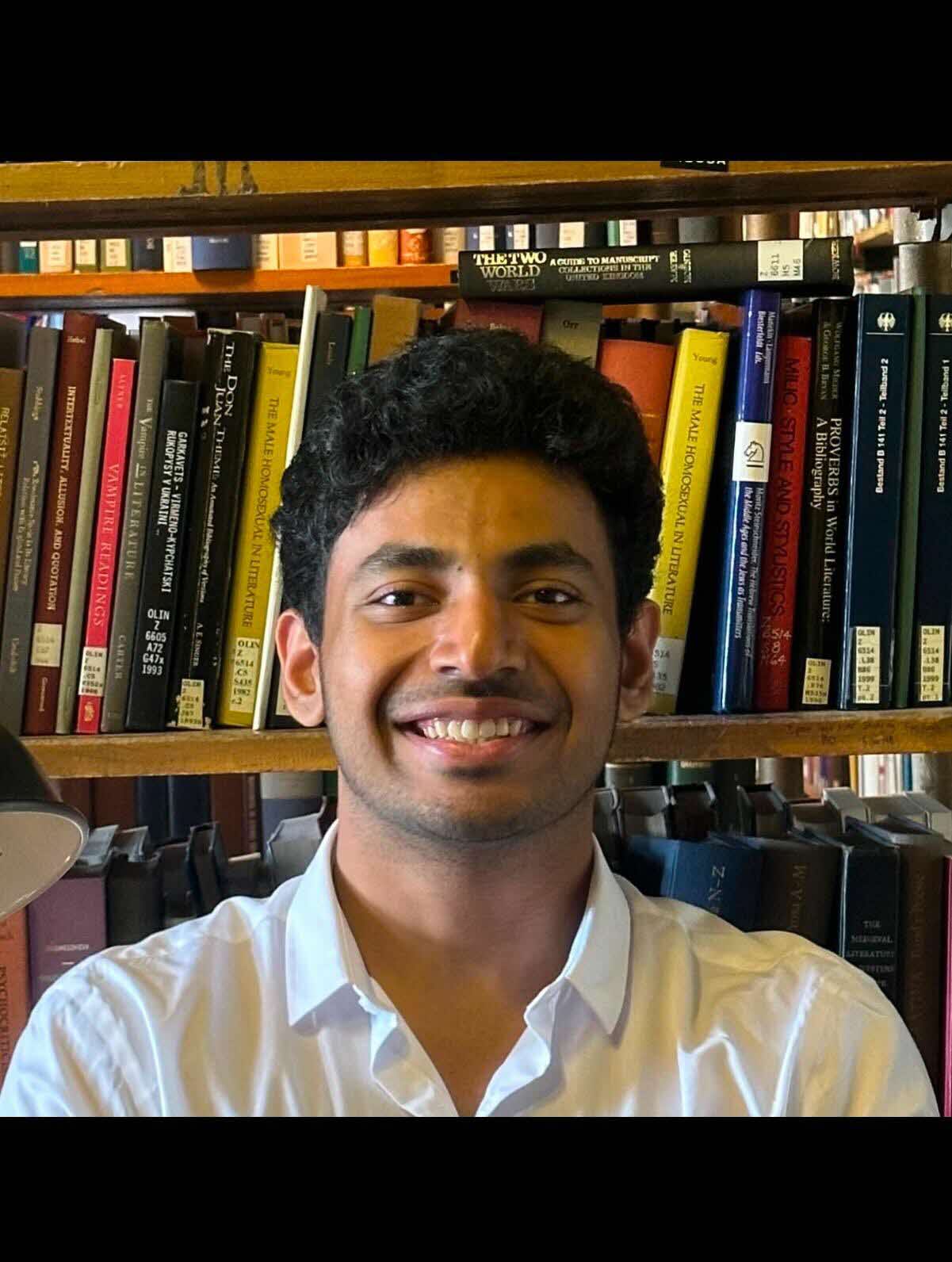

Mr Joe Sunil INCHODIKARAN

Cybersecurity Engineer, GovTech Singapore

Paths into cybersecurity leadership are rarely linear. Many leaders develop their perspective by moving across technical, operational, and strategic roles, gaining the breadth of experience required to manage complex security environments. This session reflects on how role mobility within cybersecurity helps professionals build broader judgement and prepare for leadership responsibilities over time. It also examines the realities of stepping into the CISO role, particularly the first 90 days when priorities must be established quickly, organisational dynamics understood, and critical decisions made under uncertainty. Drawing on experience in the public sector, the talk will also explore the unique challenges of managing cyber risk within government systems, where leaders must balance competing priorities, operational constraints, and evolving threats while ensuring that essential public services remain secure and resilient.

Mr Terence TEO

Chief Information Security Officer, Ministry of Sustainability & Environment

AI is accelerating how software is built, but that speed comes with its own set of risks. In this session, Kelvin and Leon share how SHIP-HATS is driving AI adoption among Singapore Government developers, enabling measurable productivity gains while navigating security, legal, cost, and control challenges. They unpack the guardrails, DevSecOps practices, and mindset shifts needed to manage these risks, and explore a key question: can security evolve from reactive enforcement to proactive enablement in an AI-driven world?

Mr Kelvin WIDJAYA

Senior Software Engineer, GovTech Singapore